Context, constraints, and execution

Each flagship project is framed as a concise case study: what needed to work, how the solution was approached, and what the final experience delivered.

Overview

This entry matters both as a media experiment and as a platform test for the site itself. It proves the portfolio can support self-hosted local video, not just external embeds, while documenting an early volumetric workflow built around more accessible tools.

Capture question

How close could small-team tooling get to workflows that previously required specialized facilities?

Technical proof

The page carries a local .webm asset as part of the project narrative, not as an afterthought.

Long-term value

It establishes a baseline for future XR applications or immersive film experiments.

Challenge

Volumetric media has spent years sitting between hype and real production feasibility. The challenge was understanding where the technology had actually become usable and which parts of the workflow were finally accessible without a purpose-built capture facility.

Approach

The project was treated as practical R&D:

- test emerging capture hardware that had become available at a smaller scale

- evaluate playback and presentation quality from the perspective of future XR use

- document the work in a format that preserved both the media output and the capture context

Implementation

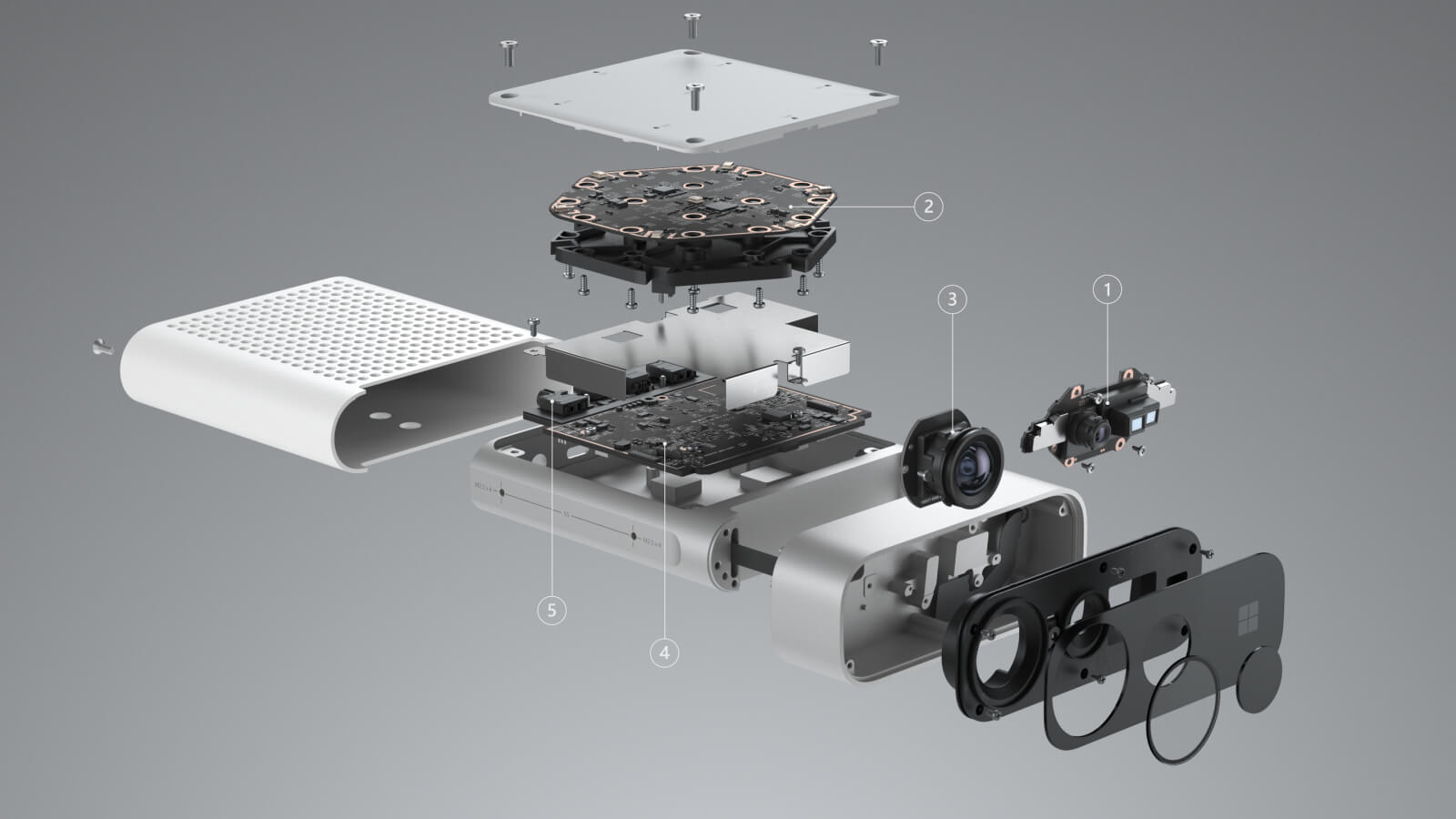

Volumetric video technology is growing rapidly and, like many emerging formats, it has gone through a branding crisis of its own. What was once a prohibitively complex and expensive process requiring multimillion-dollar dedicated facilities has started to become a moderately accessible workflow, thanks to newer software and hardware such as the Azure Kinect DK.

The local video included on this page captures that transition well: it is not just a concept piece, but evidence that the workflow could be packaged, reviewed, and shipped directly inside the portfolio without depending on a third-party host.
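For readers curious what "shipped directly inside the portfolio" can look like in practice, here is a minimal TypeScript sketch of rendering a self-hosted .webm as a native video element. It is not the site's actual code: the `LocalVideo` shape, the helper names, and the example path are all hypothetical, and it assumes the asset is served from the same origin as the page.

```typescript
// Hypothetical sketch: none of these names come from the portfolio's codebase.
interface LocalVideo {
  src: string;     // site-relative path to the self-hosted file (assumed)
  poster?: string; // optional still image shown before playback
  title: string;   // accessible label for the player
}

// Escape characters that are unsafe inside HTML attribute values.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// True when the path has no scheme or protocol-relative prefix,
// i.e. it points at the site itself rather than an external host.
function isSelfHosted(src: string): boolean {
  return !/^([a-z][a-z0-9+.-]*:)?\/\//i.test(src);
}

// Build the markup for a native <video> element with a WebM source.
function renderLocalVideo(video: LocalVideo): string {
  if (!isSelfHosted(video.src)) {
    throw new Error(`Expected a local asset path, got: ${video.src}`);
  }
  const poster = video.poster ? ` poster="${escapeAttr(video.poster)}"` : "";
  return [
    `<video controls preload="metadata" playsinline${poster} aria-label="${escapeAttr(video.title)}">`,
    `  <source src="${escapeAttr(video.src)}" type="video/webm">`,
    `  Your browser does not support WebM playback.`,
    `</video>`,
  ].join("\n");
}

// Example usage with a made-up path.
const markup = renderLocalVideo({
  src: "/media/volumetric-capture.webm",
  title: "Volumetric capture test",
});
console.log(markup);
```

Rejecting external URLs up front is a design choice for this sketch: it keeps the "no third-party host" constraint explicit in code rather than leaving it to convention.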

Media and capture setup

The gallery image supports the main video by showing part of the underlying capture environment. Together they make the page read more like a research snapshot than a generic reel entry.

Outcome

I’m excited to see how this area evolves, and I’m especially interested in applying the technology inside a future XR application or immersive VR film. Even as an exploratory piece, the project marks a clear moment when volumetric media started feeling reachable instead of purely institutional.

Selected visuals

Curated frames that support the implementation narrative and give each project a more deliberate editorial finish.